I thoroughly enjoyed the walk through Pedro's design process for his personal website, from where he drew inspiration to live snapshots of the design's iterations.

To quote Chris Coyier once again:

Redesigning your personal website is one of life’s great pleasures.

The latest edition of Matthias Ott's Own Your Web (which I recommend subscribing to!) points out that there are a lot of blogs out there, but they can be hard to discover. As a vessel to help others discover blogs, Matthias recommends curating a blogroll.

Blogrolls are great. I have one too! But I don't think they're enough. The visibility of a blogroll is limited to people that visit your blog and are curious enough to poke around. The content of a blogroll is limited to blogs you consistently follow, but individual posts are worth sharing too.

Lots of blogs do this: Chris Coyier occasionally shares links with his thoughts intertwined, Freek's blog is a mix of original articles and links, and I've come across a lot of unexpectedly interesting articles through larger blogs like Daring Fireball or Kottke. Some have a separate RSS feed for sharing content, like Jim Nielsen's notes.

In the same edition of Own Your Web, Matthias shared a link to an article titled "Curation is the last best hope of intelligent discourse". Joan Westenberg argues that with the rise of AI and algorithms, human curation is more important than ever.

Human curators can distinguish between nuanced arguments, recognise cultural subtleties, and evaluate the credibility of sources in ways that algorithms cannot. This human touch is essential for maintaining the integrity of our information ecosystem. It serves not only as a filter for quality but also as a signal for meaningful and trustworthy content amidst the overwhelming noise generated by AI systems.

Aside from its importance, an algorithm is not going to surprise you. I could listen to Spotify's Discover Weekly recommendations all day, but my taste wouldn't widen.

So, go forth and multiply content! Share what you find interesting, start a conversation, surprise your readers, and let the small web flourish!

Personal websites are often blogs these days: a chronological stream of thoughts, news, and articles. However, some content is worth more than a post stuck and lost in time.

If I need to publish content about an emerging API, I need a couple of things. I need reference documentation so that people who want to try it out understand how to use it. This reference is evergreen content, and I will update it as the API changes. It is helpful to have, right up front, information about the last time we updated the content and the version of the spec, or browser to use for testing. I also want to let people know that we’ve shipped this experiment, so I need a news post pointing to my reference material, explaining that this thing is here, and asking people to try it out and give us some feedback. I will not update the news post; what I might do, however, is write another news post when the spec and implementation changes to let people know the progress. These news posts are my paper trail.

Food for thought for my own site. I have a bunch of old articles I wish were more discoverable as pages outside of the "blog" format.

Jeremy Keith has an interesting take on how AI affects how we interact with search engines as content creators.

Previously, Google had a mutually beneficial agreement with websites: websites provided content, and Google brought traffic. Now, Google is using our content to generate and host their own.

It's been an odd few days with the changes on Reddit and Twitter – the only two major social media platforms I browse.

Platforms are great portals for discovery, but a guarantee for longevity is not their strong suit. And while the fediverse is interesting, my Mastodon experience feels more like a detox than something that stands on its own.

Read more

From Jeremy Keith:

Your app should work in a read-only mode without JavaScript.

Without JavaScript I should still be able to read my email in Gmail, even if you don’t let me compose, reply, or organise my messages.

I like this take on progressive enhancement. JavaScript is the language for interactivity on the web. Reading does not require two-way communication.

Google went down today. Downtime at this scale doesn't happen often, but when it rains, it pours. Google going down doesn't only affect Google products, it also affects products connected to Google. Apps that require authentication with your Google account weren't available unless you were already logged in.

Coincidentally, I came across a compelling article about local-first software. From a SaaS point of view, before the internet all we had was local-first.

Read more

Today I read "Web Apps Are Not A Thing, Please Stop" by Robin Rendle, who says we should stop treating websites differently from web apps.

I agree that web apps need to play by the same rules as websites. However, I draw a different line: between building for the web and building on the web.

Read more

Last week, the W3C decided they were not going to use WordPress for their next website.

The decision-making process behind this is happening in the open, on w3c.studio24.net. Accessibility was the driving force for the final choice between three contending CMSs (WordPress, Craft, and Statamic), but they did a lot of research beforehand.

Read more

Two weeks ago I shared my thoughts and doubts about the JAMstack. Today I came across an article by Mike Riethmuller, who seems to have a lot more JAMstack experience under his belt than me. Most of the article resonated with me:

Despite my enthusiasm, I'm often disheartened by the steep complexity curve I typically encounter about halfway through a JAMstack project. Normally the first few weeks are incredibly liberating. It's easy to get started, there is good visible progress, everything feels lean and fast. Over time, as more features are added, the build steps become more complex, multiple APIs are added, and suddenly everything feels slow. In other words, the development experience begins to suffer.

[…]

The end result is we've outsourced the database, fragmented the content management experience and stitched together a bundle of compromises. That’s a stark contrast from the initial ease of setting up and deploying a JAMstack site.

Mike continues with a thoughtful analysis of the current state of the JAMstack, and introduces the idea of a "JAMstack Plus", essentially bringing the best parts of JAMstack and monoliths together.

I don’t think JAMstack should be defined by pushing all the complexity into the front-end build process or by compromising on developer and user experience. Instead, I think JAMstack should focus on providing lean, configurable static front-ends.

That said, lean and configurable static front-ends are kind of a niche requirement in my world. While the JAMstack is a great tool in this space, I do believe it's in a hype phase.

Read "The Rising Complexity of JAMstack Sites and How to Manage Them" on CSS-Tricks.

I very much enjoy building sites with static site generators like Hugo or Next.js. Static site generators provide a great developer experience, perform great out of the box, and simplifying DevOps makes me a happy camper.

In my experience, the JAMstack (JavaScript, APIs, and Markup) is great until it isn't. When the day comes that I need to add something dynamic–and that day always comes–I start scratching my head.

Read more

During his lightning talk at Full Stack EU, Bram Van Damme mentioned the rule of least power.

The rule of least power was described in an essay by Tim Berners-Lee back in 2006.

When designing computer systems, one is often faced with a choice between using a more or less powerful language for publishing information, for expressing constraints, or for solving some problem. This finding explores tradeoffs relating the choice of language to reusability of information. The "Rule of Least Power" suggests choosing the least powerful language suitable for a given purpose.

This is a useful principle to follow for the web because less powerful languages fail better.

Forgot a closing tag in HTML? There's a fair chance the browser will just fix it for you.

Did you write an invalid or unsupported CSS rule? The browser will ignore the statement and parse the rest as intended.

Got a syntax error in your JavaScript app? Prepare to watch the world burn.
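The contrast above can be sketched in a few lines. This is only an illustration, not how a browser works: Python's lenient `html.parser` stands in for a forgiving HTML parser, and Python's own `ast.parse` stands in for a JavaScript engine, which likewise refuses to run a script that has a syntax error. The helper names `extract_text` and `program_parses` are made up for this example.

```python
import ast
from html.parser import HTMLParser


class TextCollector(HTMLParser):
    """Collects the text content of a document, ignoring the tags."""

    def __init__(self):
        super().__init__()
        self.chunks = []

    def handle_data(self, data):
        self.chunks.append(data)


def extract_text(markup: str) -> str:
    """Return the text of some markup, even if tags are left unclosed."""
    parser = TextCollector()
    parser.feed(markup)
    parser.close()  # flush any text that is still buffered
    return "".join(parser.chunks)


def program_parses(source: str) -> bool:
    """Return True only if the whole program is syntactically valid."""
    try:
        ast.parse(source)
        return True
    except SyntaxError:
        # One mistake anywhere means none of the program can run.
        return False


if __name__ == "__main__":
    # Both closing tags are missing, yet the content is still readable.
    print(extract_text("<p>Hello <b>world"))
    # A single unclosed parenthesis invalidates the otherwise fine first line.
    print(program_parses("print('a')\nprint('b'"))
```

The declarative format degrades to "still readable", while the more powerful language degrades to "nothing runs".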

Read the full essay on w3.org.

How much should we invest in JavaScript as developers? I've asked myself that question over and over again. Around last year I came to a conclusion: I strongly believe JavaScript is a requirement for excellent user experiences. Not good experiences, excellent experiences.

Read more

I first noticed webmentions in the wild on Hidde de Vries' blog about two years ago. Last week it finally happened: I added webmention support to my blog too! Well, partial support at least. I'm now receiving and displaying webmentions. Sending them out is a project for another day.

Read more

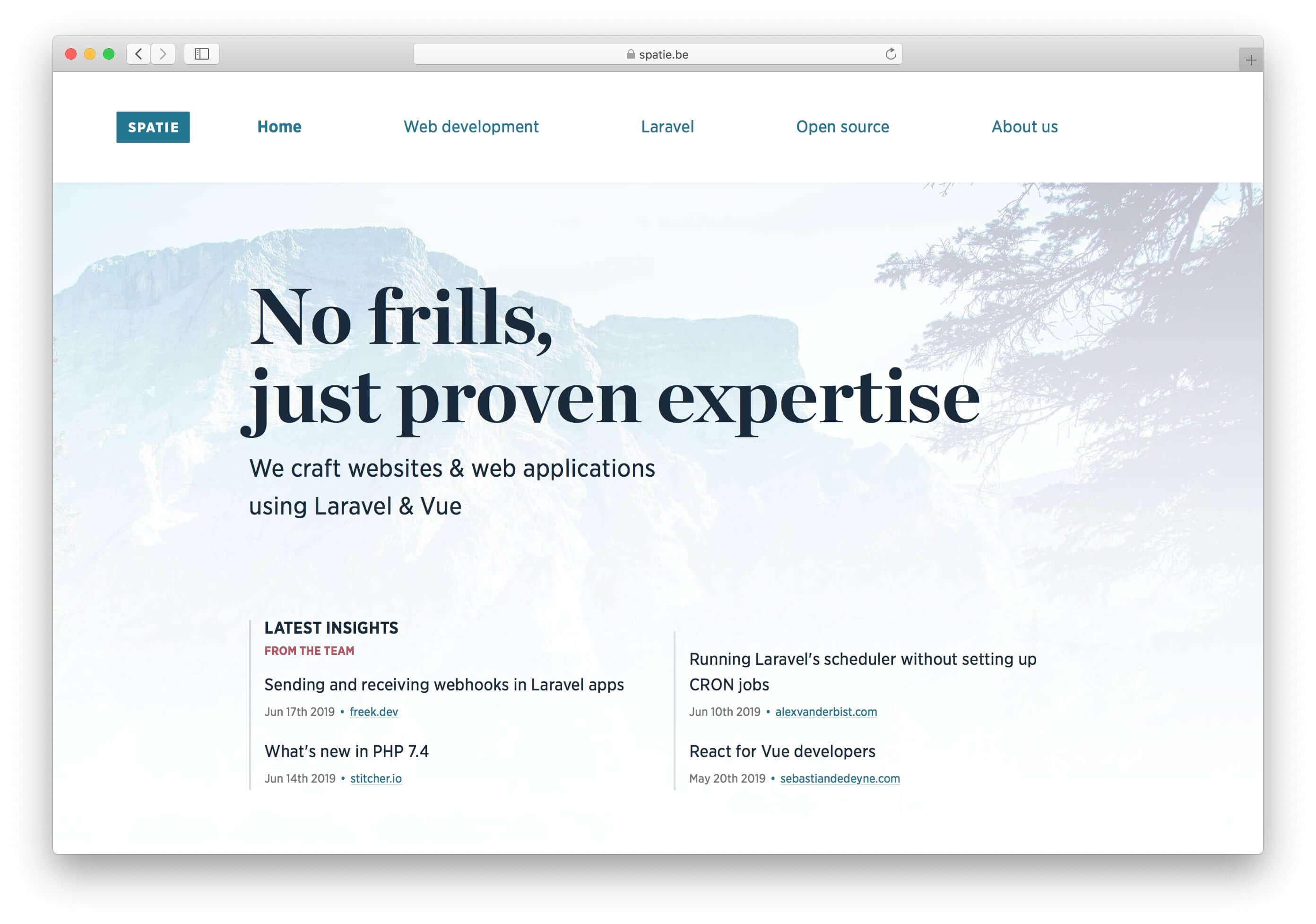

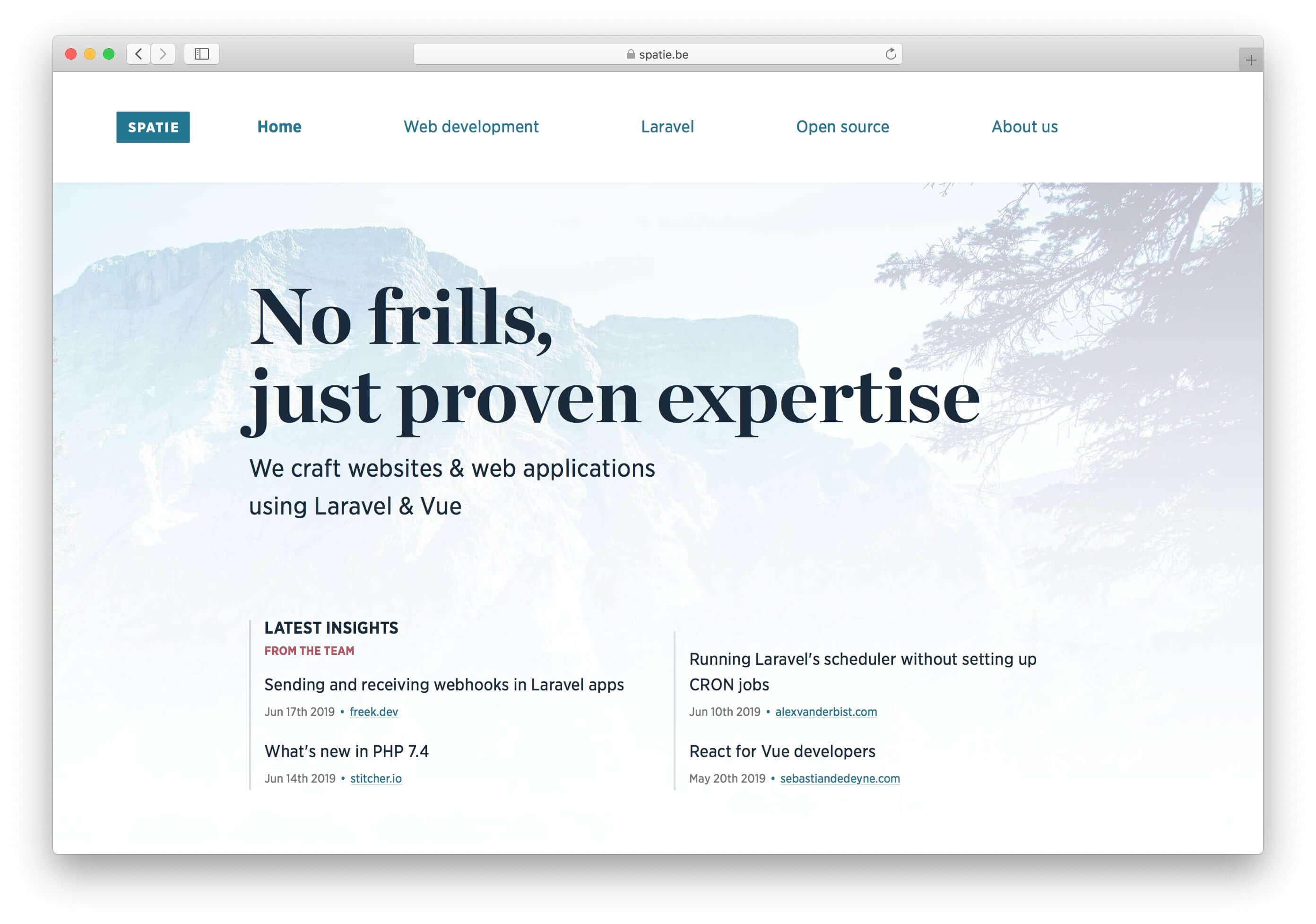

Some time last year, we released the latest iteration of the Spatie.be website.

There's a succinct description of what we're about, followed by a peculiar little block, dubbed "Latest insights from the team".

Unlike other agencies, we don't have a company blog. We encourage everyone to write on their own blog, and we put their latest articles in the spotlight.

Everyone keeps ownership of their content.

There's nothing fancy backing this feature: blog entries are synced via RSS. If you're interested in implementing something similar in PHP, our source code is available on GitHub.
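For a rough idea of what syncing via RSS can look like, here is a minimal sketch in Python rather than PHP. It is not the Spatie implementation: the `Entry` class and the `parse_feed` and `latest_insights` functions are hypothetical names, and it assumes each feed is plain RSS 2.0 whose items carry a `title`, `link`, and timezone-aware `pubDate`. Fetching the feeds over HTTP is left out, so the functions take the XML as strings.

```python
from __future__ import annotations

import xml.etree.ElementTree as ET
from dataclasses import dataclass
from datetime import datetime
from email.utils import parsedate_to_datetime


@dataclass
class Entry:
    author: str
    title: str
    link: str
    published: datetime


def parse_feed(author: str, rss_xml: str) -> list[Entry]:
    """Turn one team member's RSS 2.0 feed into a list of entries."""
    root = ET.fromstring(rss_xml)
    entries = []
    for item in root.iter("item"):
        title = item.findtext("title", default="").strip()
        link = item.findtext("link", default="").strip()
        pub_date = item.findtext("pubDate")
        if not (title and link and pub_date):
            continue  # skip items we cannot display or sort
        # RSS dates use the RFC 822 format, which email.utils can parse.
        entries.append(Entry(author, title, link, parsedate_to_datetime(pub_date)))
    return entries


def latest_insights(feeds: dict[str, str], limit: int = 3) -> list[Entry]:
    """Merge all feeds (author -> XML) and keep the newest entries."""
    entries = [
        entry
        for author, rss_xml in feeds.items()
        for entry in parse_feed(author, rss_xml)
    ]
    return sorted(entries, key=lambda e: e.published, reverse=True)[:limit]
```

Running something like this on a schedule and caching the result would keep the homepage from depending on every blog being reachable at request time.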